Ignorant, Irresponsible, and Privileged: A Critique of the CCCC Chair's Message

I couldn't believe what I was witnessing, but then again, I could.

I just witnessed a moment in educational history that will likely not age well. I attended the Chair’s address at the Conference on College Composition and Communication (CCCC) this morning, and it wasn’t pretty.

The CCCC is an international professional organization associated with the National Council of Teachers of English (NCTE). The CCCC started in 1949 as a small conference of freshman composition instructors under the auspices of the NCTE, and it grew into the world’s largest organization dedicated to teaching writing. This conference is their vanguard, their golden jewel in the crown of composition. If you are a college composition professor and you want to be noticed, you get your paper into the CCCC journal or present at the CCCC conference—then you know you have arrived.

So, naturally, as college professors who teach writing, Eugenia and I wanted to present at CCCC. It was a sort of dream for us. Our research paper on how Generative AI supports critical thinking didn’t make it in, but our workshop did. On Wednesday, we taught a workshop on how to teach with AI. It was eight hours long, and we barely scraped the surface of what we know. Eugenia was unable to attend in person, but she presented virtually while I presented in the room. We did what we believed to be a very successful workshop for a group of composition instructors who wanted to learn what to do to integrate AI into their writing courses.

This morning, alone and desperately searching for anything to do besides grade more papers, I wandered into the Chair’s address to the CCCC. I didn’t know what to expect when Jennifer Sano-Franchini stood at the podium and put up her first slide that promised her talk wasn’t about AI. People chuckled. It was a cute start, and I told myself that it was just a little professional humor despite being somewhat derisive of what I do for a living. I waited for her to talk about something else, but her first slide was a lie: the whole speech was about Generative AI. I sat there in full simmering anger as the Chair of CCCC told hundreds of writing instructors that Generative AI was the enemy.

In her screed against Generative AI, she put up slide after slide that played on the worst tropes about AI: that it was a stochastic parrot, that it was just a cheating machine, that students would never learn writing because it suppressed their critical thinking. As she talked, knowing what I know about teaching writing with Generative AI, I could tell that she knew very little about her subject. For one thing, she was at least a year behind (an age in the world of AI), and she was carefully cherry-picking her examples, her sources, and her information. But she was just getting started. This is a slide from her address:

She posited that GenAI is only one step from Artificial General Intelligence (AGI) and that AGI was in place to promote eugenics and white supremacy. I wanted to walk out, but I couldn’t look away. Her talk was like a wreck on the side of the highway. I was fascinated by the gruesome brutality and bloodletting, the shattered glass, the bent metal of her argument. I watched the long-haired and bearded man in front of me smile from ear to ear as he high-fived his compatriots in his row. I could feel the anger welling in my throat, but I stayed silent. I wanted to see this through, identify every ring in this macabre circus of well-insulated and privileged academese spilling from her throat in globules of effervescent bullshit.

She complained that Generative AI had taken the work of writers and scholars unlawfully and that it was built upon the work of people who had never been compensated. I thought about those years she probably spent on Facebook, Twitter, Google, and all those free blog sites she likely used to spread the rhetoric of her greatness around, all those sites she never paid for. Did she think there was no reckoning, no payment required in the end? As early as 2010, my big data buds were telling me that data was the new oil, that all that stuff on social media was being scraped up. I didn’t know what it was being scraped for—but the message was clear: “If the service is free, YOU are the product.” Does she think she should be compensated, but not all the companies she climbed up on her way to the podium?

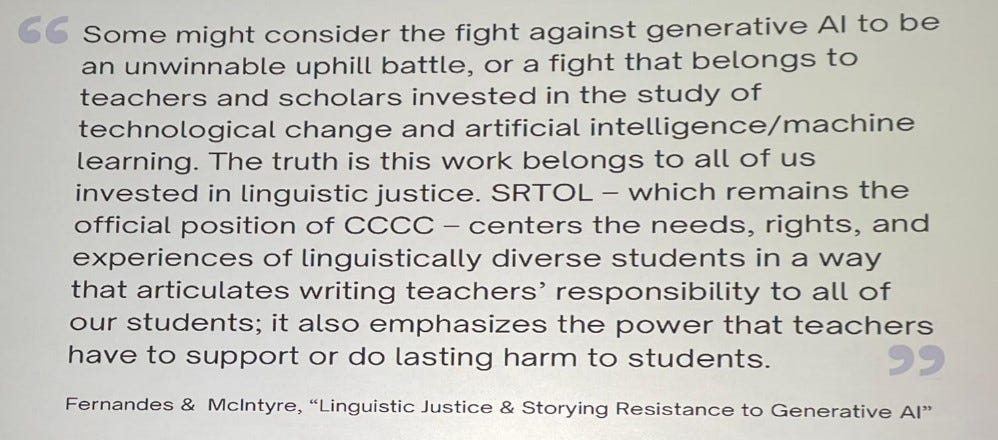

Then, she made the allegation that Generative AI was not representative of the language of linguistic minorities and that it would destroy their history and their linguistic diversity. She referred to this as “linguistic justice” and said that she stood with others who demanded that the dialects of our minority communities be respected and celebrated. Hmm, I thought. How much respect does the minority dialect of my student from Nepal or Sudan or the inner city get when they try to fill out a job application or even write a college essay to get into her esteemed university?

Where is her “linguistic justice” then? Does she give them credit for writing in their dialect in her composition courses, or does she teach them what they need to know about accessing the language of power to fulfill their dreams? Who is she to tell them they need to prioritize “linguistic justice” over their college and careers? Had she ever spoken to one of these kids who is trying to get from MLK Boulevard to Georgia Tech, or from Kazakhstan to Kennesaw State? Those kids will use Generative AI anyway, but without my help, they won’t learn to use it responsibly, ethically, and effectively. It didn’t sound like justice as much as it sounded like the soft bigotry of low expectations.

Then she rallied for “resistance” against AI in writing, while she showed slides of a book she was publishing with some academic friends and how she had founded an AI Resistance group. She even showed the QR code (probably produced with AI). Wow. She was definitely going to make a buck on this, I thought. So much for her pure motives.

What was even worse, I thought to myself as my eyes scanned the crowd of smiling, exuberant writing professors, she had given them an excuse, a new reason to ignore the pressing need to learn how to teach with AI. She had given them a list of academic (but not practical) reasons to sit in the wreck of their pedagogy, in shock, refusing to move as it burned around them. Each of those instructors she was goading on was the teacher of hundreds of students, and she was sentencing each of those students to a future where they would have no idea how to responsibly and ethically use AI.

As she finished her speech, the crowd around me rose to their feet and gave her a standing ovation. I sat mourning the moment.

It was one of the most ignorant, irresponsible, and privileged speeches I had ever heard. She wasn’t saving writing; she was condemning it. At this moment in time, writing teachers and the profession of English can position themselves to begin the hard work of preparing our students for a future with AI. Or not.

We can teach our students to use Generative AI responsibly. Or not.

What we do at this moment determines whether the field of writing instruction survives. Or not.

FWIW, the CCCCs was not all anti-AI: there was your workshop, some of the talks I went to, and the talk I gave, which was specifically about the need to pay attention to AI rather than refuse or resist it. https://open.substack.com/pub/payattention2ai/p/considering-current-ai-writing-pedagogies?r=m75ih&utm_campaign=post&utm_medium=web&showWelcomeOnShare=true

How exactly is it inaccurate to say that LLMs facilitate linguistic homogenization and injustice? This has been demonstrated by Bender et al. as early as 2021, and others including Antonio Byrd, Alfred Owusu Ansah, and Carmen Kynard have written about how linguistic injustice is embedded in these technologies. What evidence refutes the claim Sano-Franchini shared? In what ways did her talk show that she's "a year behind," as you suggested?